- Register an account at aicrowd.com.

- Register an account at gitlab.aicrowd.com (so that you can push to and pull from repositories there). Instead of registering from scratch, you can sign in with your aicrowd account (recommended).

- Generate a new SSH key pair for gitlab.aicrowd.com. If you did this in the past (for GitHub or anything else), you may reuse the public key you already generated. Otherwise, generate one with `ssh-keygen -t ed25519` on a Linux machine.

Here’s the output I got from the command:

user@ubuntu:~$ ssh-keygen -t ed25519

Generating public/private ed25519 key pair.

Enter file in which to save the key (/home/user/.ssh/id_ed25519):[press enter]

Enter passphrase (empty for no passphrase):[leave empty, press enter]

Enter same passphrase again:[press enter]

Your identification has been saved in /home/user/.ssh/id_ed25519.

Your public key has been saved in /home/user/.ssh/id_ed25519.pub.

The key fingerprint is:

SHA256:qGBFzFBNDP9s1YcC6RncriUNgRFg+53Ji6DIw3j**** user@ubuntu

The key's randomart image is:

+--[ED25519 256]--+

| .=**+=o+ |

| ooo+ =... . |

| o .. *o o . |

| . . *+++. . |

| o . o S+ |

****

+----[SHA256]-----+

user@ubuntu:~$

To see your generated public key, enter `cat .ssh/id_ed25519.pub` from your home directory. The output should look like ssh-ed25519 AAAAC3NzaC1lZDI1NTE5AAAAIDHApZr8FPpCfcFlQiW4HXS6TNaplXjDgWd2xNDsxeXV

Now go to https://gitlab.aicrowd.com/profile/keys and paste the content of id_ed25519.pub into the big textbox. Press Add Key and you’re done.

In case you don’t understand this step: in public key cryptography, you generate a key pair (one private key, one public key) and give the public key to a third party (in this case gitlab.aicrowd.com). When you connect, you sign a message with the private key (which only you have); the third party checks that signature with your public key and can be confident that the message was sent by you and you only. This relies on the property that although the public key can verify a signature made with the private key, it’s practically impossible to infer the private key from the public key, so you don’t have to worry that a leaked public key would let someone impersonate you.
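If the idea still feels abstract (only the private key holder can produce something that anyone with the public key can check), here’s a toy sketch in Python. It uses textbook RSA with two tiny primes, which is an assumption made purely for illustration: it is completely insecure, it is not the Ed25519 scheme that `ssh-keygen -t ed25519` uses, and the names `sign` and `verify` are made up for this example.

```python
import hashlib

# Toy RSA key pair built from two tiny primes (insecure, illustration only).
P, Q = 61, 53
N = P * Q                    # public modulus
PHI = (P - 1) * (Q - 1)
E = 17                       # public exponent, shared with everyone
D = pow(E, -1, PHI)          # private exponent, kept secret (Python 3.8+)


def digest(message: bytes) -> int:
    """Hash the message and reduce it into the range the toy modulus can handle."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Computing this value requires the private exponent D."""
    return pow(digest(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Anyone holding the public pair (E, N) can run this check."""
    return pow(signature, E, N) == digest(message)


signature = sign(b"let me in")
print(verify(b"let me in", signature))            # genuine signature
print(verify(b"let me in", (signature + 1) % N))  # altered signature
```

The first check passes and the second one fails, because the verifier only ever needs `E` and `N`, while producing a matching signature needs `D`.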

To test whether gitlab.aicrowd.com recognizes you via SSH, enter `ssh -T git@gitlab.aicrowd.com`. If everything’s good you should see Welcome to GitLab, @Username! in the output.

By default, ssh looks for key pairs named .ssh/id_xxx in your home directory, which is why we don’t have to specify them in the command above.

For more on this please refer to https://gitlab.aicrowd.com/help/ssh/README#generating-a-new-ssh-key-pair

- Clone the Flatland repository to your local machine.

git clone git@gitlab.aicrowd.com:flatland/flatland.git

The above command will fail if you don’t have the correct key pair on your machine.

I do most of my development on Windows (because I’m not a pure programmer, and a lot of my work involves proprietary software), so I have to copy the key pair files generated within Ubuntu onto my Windows machine.

Simply copy ~/.ssh/id_* to %HOME%/.ssh and you’re good to go.

Side note: Git for Windows locates your key pairs via the HOME environment variable (hence the %HOME%), so the actual path may vary. To change it, edit the value of HOME in Edit Environment Variables.
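To double-check that the copy landed where it should, a small Python sketch like the one below can help. It assumes, as described above, that the keys are looked up in the `.ssh` folder under HOME; the helper names `ssh_dir` and `complete_key_pairs` are hypothetical, made up for this example.

```python
import os
from pathlib import Path


def ssh_dir(env=os.environ) -> Path:
    """Return the .ssh directory under HOME, falling back to the user's home directory."""
    home = env.get("HOME")
    return (Path(home) if home else Path.home()) / ".ssh"


def complete_key_pairs(directory: Path) -> list:
    """List names like 'id_ed25519' for which both the private file and the .pub file exist."""
    if not directory.is_dir():
        return []
    return sorted(
        pub.stem
        for pub in directory.glob("id_*.pub")
        if (directory / pub.stem).is_file()
    )


if __name__ == "__main__":
    folder = ssh_dir()
    print(folder, complete_key_pairs(folder))
```

If the printed list is empty, either HOME points somewhere unexpected or only one half of the pair was copied.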

Side note 2: maybe I shouldn’t use Windows for this project at all. We’ll see.

- Install the repository as a Python module. Because the repository is updated quite frequently, you should `pip install -e flatland`, where `-e` stands for `editable` (instead of copying everything into `site-packages`), so that a `git pull` on `flatland` will update the code immediately without reinstallation.

pip will download and install all the dependencies. Use a proxy (set https_proxy=http://blahblah:hehe) if you’re in China. Use patience otherwise.
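Once pip finishes, you can check that the editable install really points at your clone and not at a copy. Here’s a minimal sketch, assuming the package is importable under the name `flatland` (that name is my assumption, and `module_location` is a hypothetical helper):

```python
import importlib.util
from pathlib import Path


def module_location(name: str):
    """Return the directory a top-level package would be imported from, or None if not found."""
    spec = importlib.util.find_spec(name)
    if spec is None or spec.origin is None:
        return None
    return Path(spec.origin).resolve().parent


if __name__ == "__main__":
    # With an editable install this is expected to be a folder inside your clone,
    # not a folder inside site-packages.
    print(module_location("flatland"))
```

If it prints `None`, the package isn’t visible to the Python interpreter you ran the script with.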

‘All the dependencies’ means a LOT of PyPI packages (this is a research-grade project). You may want to use virtualenv to avoid polluting your Python workspace.
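If you don’t have virtualenv installed, Python’s built-in `venv` module does a similar job. Below is a rough sketch that swaps virtualenv for the standard library; the folder name `flatland-env` in the usage note and the helper `create_env` are made up for this example.

```python
import sys
import venv
from pathlib import Path


def create_env(target: Path, with_pip: bool = True) -> Path:
    """Create a virtual environment at `target` and return the path of its Python interpreter."""
    venv.create(target, with_pip=with_pip)
    # The interpreter lives in Scripts\ on Windows and in bin/ elsewhere.
    if sys.platform == "win32":
        return target / "Scripts" / "python.exe"
    return target / "bin" / "python"
```

Calling `create_env(Path("flatland-env"))` gives you an interpreter path; running that interpreter with `-m pip install -e flatland` keeps all the dependencies inside the `flatland-env` folder.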

- type

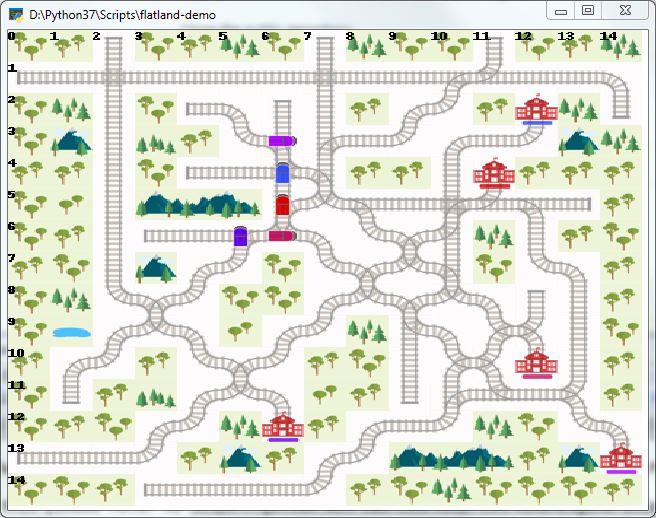

flatland-demoin your terminal. if everything went well you should see a bunch of trains moving in a grid world.

aside from the aspect ratio and the very low FPS, everything seemed fine.

(to be continued)