Round 1 has finished! Here are the winners of this first round

RL solutions:

- Team MARMot-Lab-NUS with -0.611
- Team JBR_HSE with -0.635
- Team BlueSky with -0.852

Other solutions:

- Team An_Old_Driver with -0.104
- Team MasterFlatland with -0.107
- Participant Zain with -0.116

Congratulations to all of them!

The competition is only getting started: anyone can still join (Round 1 was not a qualifying round), and the prizes will be awarded based on the results of Round 2.

When will Round 2 start? Can I still submit right now?

We are still hard at work on Round 2, which is expected to start sometime this week. In the meantime, you can keep submitting to Round 1 to try out new ideas.

Now that Round 1 has officially finished, the leaderboard is “frozen”, and the winners listed above will keep their Round 1 positions whatever happens. But you can still see how your new submissions would rank by enabling the “Show post-challenge submissions” filter on the leaderboard.

Problem Statement in Round 2

In Round 1, your submissions had to solve a fixed number of environments within an 8-hour time limit.

In Round 2, things are a bit different: your submission will have to solve as many environments as possible in 8 hours. There are enough environments that even the fastest solutions couldn’t solve them all in 8 hours (and if that did happen, we’d just generate more).

The environments start very small and grow progressively larger. The evaluation stops if the percentage of agents reaching their targets drops below 25% (averaged over 10 episodes), or after 8 hours, whichever comes first. Each solved environment awards you points, and the goal is to get as many points as possible.

As in Round 1, the environment specifications will be publicly accessible.

This means that the challenge will not only be to find the best solutions possible, but also to find solutions quickly. This is consistent with the business requirements of railway companies: it’s very important for them to be able to re-route trains as fast as possible when a malfunction occurs!
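
The stopping rule described above can be sketched roughly as follows. This is only an illustration, not the evaluator's actual code: the function names are hypothetical, and it assumes that "averaged over 10 episodes" means a rolling average over the 10 most recent episodes, checked only once 10 episodes are available. The real evaluator may average differently.

```python
from collections import deque

TIME_LIMIT_S = 8 * 60 * 60   # 8-hour budget from the announcement
MIN_COMPLETION = 0.25        # stop below 25% of agents reaching their targets
WINDOW = 10                  # "averaged over 10 episodes" (assumed rolling window)


def should_stop(recent_rates, elapsed_s):
    """Return True once either stopping condition is met.

    recent_rates: completion rates (0.0 to 1.0) of the most recent episodes.
    Assumption: the average is only checked once WINDOW episodes exist.
    """
    if elapsed_s >= TIME_LIMIT_S:
        return True
    if len(recent_rates) < WINDOW:
        return False
    window = list(recent_rates)[-WINDOW:]
    return sum(window) / WINDOW < MIN_COMPLETION


def count_episodes_before_stop(rates, seconds_per_episode):
    """Replay per-episode completion rates and count how many episodes
    are evaluated before the run is stopped (hypothetical helper)."""
    recent = deque(maxlen=WINDOW)
    elapsed = 0.0
    done = 0
    for rate in rates:
        if should_stop(recent, elapsed):
            break
        recent.append(rate)
        elapsed += seconds_per_episode
        done += 1
    return done
```

Under these assumptions, a slow but accurate solution is cut off by the time limit, while a fast but inaccurate one is cut off by the completion threshold, which is why both speed and quality matter in Round 2.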

Optimized Flatland environment

One of the most common frustrations in Round 1 was the speed of the environment.

We have implemented a number of performance improvements. The pip package will be updated soon. You can already try them out by installing Flatland from source (master branch):

pip install git+https://gitlab.aicrowd.com/flatland/flatland.git

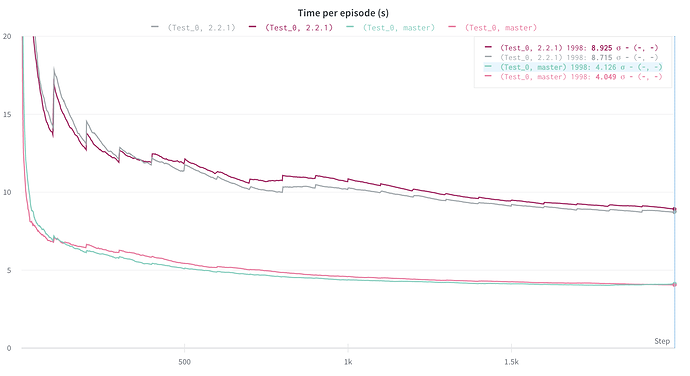

The improvements are especially noticeable in smaller environments. For example, here is the time per episode while training a DQN agent in Test_0, using pip release 2.2.1 vs the current master branch:

(using DQN training code from here: https://gitlab.aicrowd.com/flatland/flatland-examples)
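
If you want to run a similar comparison on your own setup, a minimal timing sketch could look like the one below. It is not the code used to produce the measurements above: `time_episodes` and `dummy_episode` are hypothetical names, and the dummy workload is a placeholder to be replaced by one full episode of your own agent, run once per installed Flatland version.

```python
import time
from statistics import mean


def time_episodes(run_episode, n_episodes=10):
    """Call run_episode() n_episodes times; return per-episode wall-clock seconds."""
    durations = []
    for _ in range(n_episodes):
        start = time.perf_counter()
        run_episode()
        durations.append(time.perf_counter() - start)
    return durations


def dummy_episode():
    # Placeholder workload; replace with one full reset/step loop of your agent.
    sum(i * i for i in range(10_000))


if __name__ == "__main__":
    durations = time_episodes(dummy_episode, n_episodes=5)
    print(f"mean time per episode: {mean(durations):.4f}s")
```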

Train Close Following

As some of you have noticed during Round 1, the current version of Flatland makes it hard to move trains close to one another. You usually need to keep an empty cell between two trains, or take their IDs into account to make sure they can follow each other closely.

This limitation has been lifted. The new motion system is also available in the master branch. See here for a detailed explanation of what it means, how it can help you, and how it was implemented: https://discourse.aicrowd.com/t/train-close-following
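
To give an intuition for why train IDs could matter, here is a toy one-dimensional sketch. It is not Flatland's implementation (see the linked post for that): it assumes a single straight track, every train wanting to advance by one cell, and hypothetical function names. The first function processes trains one by one in ID order; the second lets a train enter a cell that is vacated in the same step.

```python
def step_in_id_order(positions, track_length):
    """positions: dict train_id -> cell index; every train wants cell + 1.
    Trains are processed in ascending ID order against the live occupancy."""
    positions = dict(positions)
    for tid in sorted(positions):
        target = positions[tid] + 1
        if target < track_length and target not in positions.values():
            positions[tid] = target
    return positions


def step_resolved(positions, track_length):
    """Same move, but a train may enter a cell vacated in the same step."""
    occupant = {cell: tid for tid, cell in positions.items()}
    can_move = {}
    # Decide from the front of the track backwards so each leader is resolved first.
    for cell in sorted(occupant, reverse=True):
        tid = occupant[cell]
        target = cell + 1
        if target >= track_length:
            can_move[tid] = False            # end of track
        elif target not in occupant:
            can_move[tid] = True             # free cell ahead
        else:
            can_move[tid] = can_move[occupant[target]]  # follow the leader
    return {tid: cell + (1 if can_move[tid] else 0)
            for tid, cell in positions.items()}
```

In this toy model, with train 0 directly behind train 1, the ID-ordered update blocks train 0 and opens a gap, while the resolved update moves both trains together whatever their IDs.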

More coming soon…